Central Motor Conduction Time (CMCT) is a neurophysiological parameter that measures the time taken for a motor impulse to travel along the central motor pathways from the motor cortex to the spinal motor neurons. Here is a detailed explanation of Central Motor Conduction Time:

1. Definition: CMCT is a measure of conduction time through the central nervous system, specifically along the corticospinal tract, which is responsible for voluntary motor control. It reflects the integrity and efficiency of the neural pathways connecting the motor cortex to the spinal motor neurons.

2. Methodology:

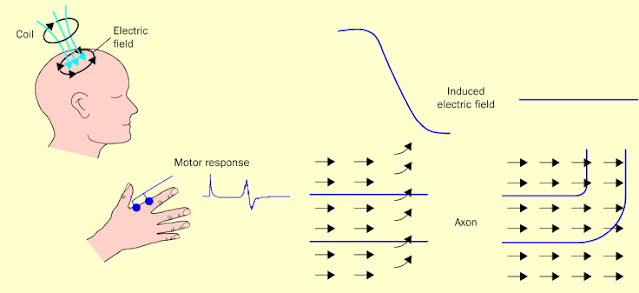

o Stimulation: CMCT is typically assessed using transcranial magnetic stimulation (TMS) to stimulate the primary motor cortex and evoke motor responses in the target muscles. The latency of the motor evoked potentials (MEPs) elicited by TMS gives the total conduction time from the cortex to the muscle, which is the starting point for calculating CMCT.

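o Recording: Electromyography (EMG) recordings are used to capture the MEPs in the muscles of interest. The onset latency of the MEP relative to the TMS pulse covers both the central and the peripheral segments of the pathway, so CMCT is calculated by subtracting the peripheral motor conduction time (commonly estimated from F-wave latencies or from stimulation over the spinal roots) from the MEP latency. The remainder reflects cortical activation, conduction along the corticospinal tract, and synaptic transmission at the spinal motor neurons; neuromuscular junction transmission belongs to the peripheral segment and is removed by the subtraction.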
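One common way to derive CMCT from these recordings is the F-wave method: the peripheral conduction time is estimated as (F + M - 1) / 2, where F and M are the F-wave and M-wave latencies and 1 ms is the conventional allowance for turnaround at the motor neuron, and that estimate is subtracted from the MEP latency. The sketch below illustrates the arithmetic together with a very simple threshold-based onset detector. The function names, the threshold rule (baseline mean plus `k` standard deviations), and all numbers are assumptions made for illustration, not a clinical protocol; real traces would also need the TMS stimulus artifact excluded before searching for the onset.

```python
from statistics import mean, stdev


def mep_onset_latency_ms(emg_uv, sample_rate_hz, stim_index,
                         baseline_samples=50, k=3.0):
    """Latency (ms) from the TMS pulse to the first post-stimulus sample whose
    rectified amplitude exceeds baseline mean + k * SD.

    Illustrative rule only. Returns None if nothing crosses the threshold.
    """
    if stim_index < baseline_samples or baseline_samples < 2:
        raise ValueError("need at least 2 baseline samples before the stimulus")
    rectified = [abs(v) for v in emg_uv]
    baseline = rectified[stim_index - baseline_samples:stim_index]
    threshold = mean(baseline) + k * stdev(baseline)
    for i in range(stim_index, len(rectified)):
        if rectified[i] > threshold:
            return (i - stim_index) * 1000.0 / sample_rate_hz
    return None


def peripheral_time_f_wave_ms(f_latency_ms, m_latency_ms, turnaround_ms=1.0):
    """Peripheral motor conduction time estimated as (F + M - 1) / 2."""
    return (f_latency_ms + m_latency_ms - turnaround_ms) / 2.0


def cmct_ms(mep_latency_ms, f_latency_ms, m_latency_ms):
    """CMCT = MEP onset latency minus the estimated peripheral time."""
    return mep_latency_ms - peripheral_time_f_wave_ms(f_latency_ms, m_latency_ms)


if __name__ == "__main__":
    # Made-up latencies, chosen only to show the arithmetic.
    print(cmct_ms(mep_latency_ms=20.0, f_latency_ms=26.0, m_latency_ms=3.0))
```

With the made-up latencies above, the peripheral estimate is (26 + 3 - 1) / 2 = 14 ms, so the sketch reports a CMCT of 6 ms. When spinal root stimulation is used instead of F-waves, the root-stimulation latency simply takes the place of the peripheral estimate in the subtraction.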

3. Significance:

o Motor Pathway Integrity: CMCT provides information about the functional integrity of the central motor pathways, including the corticospinal tract. Prolonged CMCT may indicate disruptions or abnormalities in neural conduction along these pathways, which can be associated with neurological conditions affecting motor function.

o Diagnostic Value: Changes in CMCT can be observed in various neurological disorders, such as multiple sclerosis, motor neuron diseases, and stroke. Tracking CMCT can aid diagnosis, help follow disease progression, and support assessment of the effects of therapeutic interventions.

4. Clinical Applications:

o Neurological Disorders: CMCT measurements are used in clinical neurophysiology to evaluate motor pathway function in patients with neurological conditions affecting the central nervous system. Abnormal CMCT values can indicate underlying pathology and help guide treatment decisions.

o Research: CMCT assessments are also valuable in research settings to investigate motor system physiology, plasticity, and adaptations in response to interventions such as rehabilitation, pharmacological treatments, or neurostimulation techniques. Studying CMCT can provide insights into motor control mechanisms and neural plasticity.

In summary, Central Motor Conduction Time is a neurophysiological parameter that assesses the conduction time along the central motor pathways from the motor cortex to the spinal motor neurons. By measuring CMCT, clinicians and researchers can evaluate the integrity of the corticospinal tract, support the diagnosis of neurological disorders affecting motor function, and monitor changes in motor pathway function over time.
