The relationship between experience and the brain is characterized by dynamic patterns of change that reflect the impact of environmental stimuli, learning, and behavioral interactions on neural structure and function. Here are key patterns of change in the relationship between experience and the brain:

1. Sensitive Periods:

o Early Development: Experience plays a crucial role during sensitive periods in early development, when the brain is highly responsive to environmental input. These sensitive periods are characterized by rapid and efficient learning in domains such as language acquisition, sensory processing, and social interaction.

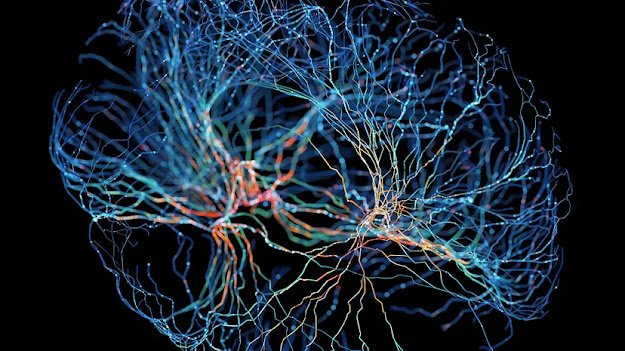

o Neural Plasticity: During sensitive periods, the brain exhibits heightened neural plasticity, allowing for the formation of synaptic connections and neural circuits in response to specific experiences. This plasticity enables the brain to adapt to environmental stimuli and optimize cognitive development.

2. Experience-Expectant vs. Experience-Dependent Processes:

o Environmental Information: Experience-expectant processes involve storing environmental information that is expected to be present in the typical environment, such as motion or visual contrasts. These processes rely on the overproduction of synaptic connections early in life, with synaptic pruning then refining those connections based on experience.

o Individual Learning: Experience-dependent processes store information specific to the individual, such as the location of resources or personal experiences. These processes involve the formation of new synaptic connections in response to unique learning occasions, allowing for individualized adaptations based on personal experiences.

3. Neural Reorganization:

o Synaptic Pruning: Experience shapes the developing brain through synaptic pruning, in which unused or less relevant synaptic connections are eliminated while frequently activated connections are strengthened and maintained. This process refines neural circuits and tunes brain function to experience.

o Adaptive Changes: Neural reorganization in response to experience allows the brain to adapt to changing environmental demands and learning opportunities. The formation of new synaptic connections and the refinement of existing circuits support adaptive behaviors and cognitive flexibility.

4. Lifelong Learning:

o Continual Impact: Throughout life, experiences continue to influence brain structure and function, contributing to ongoing learning and cognitive development. Learning new skills, acquiring knowledge, and engaging in novel experiences can lead to structural changes in the brain at any age.

o Cognitive Health: Active mental and physical engagement with the world promotes cognitive health and neurological well-being in later life. Lifelong learning and cognitive stimulation support brain plasticity, resilience, and cognitive vitality across the lifespan.

Understanding the patterns of change in the relationship between experience and the brain highlights the dynamic nature of neural development, the role of environmental influences in shaping brain structure, and the lifelong impact of experiences on cognitive function and behavioral adaptation. These patterns underscore the importance of enriched environments, learning opportunities, and social interactions in promoting healthy brain development and cognitive well-being.
